Thermodynamic Computing: The Next Leap in AI Efficiency

Introduction

In the race for more efficient, powerful, and sustainable artificial intelligence systems, a new frontier is emerging: thermodynamic computing. This concept promises to redefine how we build and operate intelligent machines. By exploiting the physics of thermodynamics rather than fighting it, this paradigm could dramatically reduce energy consumption and unlock new levels of AI efficiency.

In this article, we explore what thermodynamic computing is, how it works, and why it could be the most important advancement in AI since deep learning. Whether you’re a tech enthusiast, a developer, or an AI researcher, understanding this innovation could prepare you for the future of computing.

What Is Thermodynamic Computing?

Thermodynamic computing is a new computational paradigm that leverages the laws of thermodynamics—particularly entropy and energy dissipation—to process information. Unlike traditional computing, which relies on binary states and deterministic logic gates, thermodynamic computing embraces uncertainty, fluctuation, and energy transfer to reach intelligent outcomes.

This approach has been pioneered by physicists and researchers who believe that understanding the universe’s natural information-processing mechanisms can inspire more efficient machine intelligence.

“We aim to build machines that think more like the universe itself does” — Guillaume Verdon, researcher at Google X

The Science Behind the Concept

At its core, thermodynamic computing is based on the principles of statistical mechanics. Instead of executing a fixed sequence of operations, the system explores many possible states and settles into those with the lowest energy. This mimics annealing, a physical process in which a material is cooled slowly so that it relaxes into a stable, low-energy configuration.
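
To make the annealing idea concrete, here is a minimal software sketch of simulated annealing, the classic algorithm inspired by the same physical process. It is only an illustration running on an ordinary CPU, not how a thermodynamic chip works internally. The bumpy `energy` function, the `neighbor` move, and all parameter values are assumptions chosen for the example.

```python
import math
import random


def simulated_annealing(energy, neighbor, state, t_start=5.0, t_end=1e-3,
                        steps=20000, seed=0):
    """Search for a low-energy state while the temperature is slowly lowered."""
    rng = random.Random(seed)
    current, e_current = state, energy(state)
    best, e_best = current, e_current
    for k in range(steps):
        # Geometric cooling schedule from t_start down to t_end.
        t = t_start * (t_end / t_start) ** (k / (steps - 1))
        candidate = neighbor(current, rng)
        e_candidate = energy(candidate)
        delta = e_candidate - e_current
        # Always accept downhill moves; accept uphill moves with
        # probability exp(-delta / t), which shrinks as the system cools.
        if delta <= 0 or rng.random() < math.exp(-delta / t):
            current, e_current = candidate, e_candidate
            if e_current < e_best:
                best, e_best = current, e_current
    return best, e_best


def energy(x):
    # Hypothetical 1D landscape with many local minima; global minimum at x = 0.
    return x * x + 10.0 * (1.0 - math.cos(2.0 * math.pi * x))


def neighbor(x, rng):
    # Propose a small random perturbation of the current state.
    return x + rng.gauss(0.0, 0.5)


best_x, best_e = simulated_annealing(energy, neighbor, state=4.0)
print(f"best x = {best_x:.3f}, energy = {best_e:.3f}")
```

At high temperature the search accepts many uphill moves and roams freely across the landscape. As the temperature drops, it becomes increasingly reluctant to leave a good valley, which is the "slowly reduce energy" behavior described above.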

Thermodynamic systems are not deterministic. They evolve according to probabilities: low-energy states are more likely than high-energy ones, but random fluctuations keep every state reachable. This makes them inherently different from digital circuits, and it can be very useful for solving complex optimization problems, such as training deep learning models or simulating biological systems.
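
The link between energy and probability can be sketched with the Boltzmann distribution from statistical mechanics, where a state with energy E has probability proportional to exp(-E / T). The snippet below is a small illustration under assumed values: the three energy levels and the two temperatures are made up, and the temperature is expressed in units where Boltzmann's constant is 1.

```python
import math


def boltzmann_probabilities(energies, temperature):
    """Return the probability of each state for the given energies and temperature."""
    # Shift by the minimum energy for numerical stability; the shift
    # cancels out when the weights are normalized.
    e_min = min(energies)
    weights = [math.exp(-(e - e_min) / temperature) for e in energies]
    partition = sum(weights)
    return [w / partition for w in weights]


energies = [0.0, 1.0, 2.0]  # hypothetical energy levels
hot = boltzmann_probabilities(energies, temperature=10.0)
cold = boltzmann_probabilities(energies, temperature=0.1)

print("hot: ", [round(p, 3) for p in hot])
print("cold:", [round(p, 3) for p in cold])
```

When the system is hot, the three states are almost equally likely. When it is cold, nearly all of the probability sits on the lowest-energy state, which is why cooling a system drives it toward an optimized configuration.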

Why AI Needs Thermodynamic Efficiency

Today’s AI systems consume massive amounts of energy. Training models like GPT or BERT demands thousands of GPU hours and substantial electricity. Thermodynamic computing offers a radically different approach:

- Reduced energy use: Simulations suggest power consumption could fall by up to 10x, though this has yet to be shown on production hardware.

- More organic learning: Learning processes mirror real-world systems, enhancing adaptation.

- Unsupervised capabilities: Systems evolve and adapt with minimal human intervention.

These benefits make thermodynamic computing a natural fit for advancing AI beyond its current hardware limits.

Applications in Artificial Intelligence

Thermodynamic computing could impact multiple areas within AI, including:

- Neuromorphic engineering: Building chips that mimic brain-like energy efficiency.

- Quantum-inspired optimization: Tackling hard optimization problems by using random fluctuations to escape poor local solutions.

- Reinforcement learning: More natural exploration of state spaces.

- Edge AI: Efficient, low-power AI for devices like smartphones and IoT gadgets.

It also opens doors to adaptive systems that continuously learn without retraining from scratch.
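
As an illustration of the reinforcement learning point above, here is a minimal sketch of Boltzmann (softmax) exploration, a standard technique in which a temperature parameter controls how randomly an agent picks actions. It runs in ordinary software and is only an analogy for what thermodynamic hardware might do natively. The `q_values` and temperatures are hypothetical.

```python
import math
import random


def softmax_policy(q_values, temperature):
    """Turn action-value estimates into action probabilities."""
    # Subtract the maximum for numerical stability before exponentiating.
    q_max = max(q_values)
    weights = [math.exp((q - q_max) / temperature) for q in q_values]
    total = sum(weights)
    return [w / total for w in weights]


def sample_action(q_values, temperature, rng):
    """Sample one action index according to the softmax probabilities."""
    probs = softmax_policy(q_values, temperature)
    return rng.choices(range(len(q_values)), weights=probs, k=1)[0]


rng = random.Random(0)
q_values = [1.0, 1.5, 0.2]  # hypothetical value estimates for three actions

# Count how often each action is chosen at a moderate temperature.
counts = [0, 0, 0]
for _ in range(1000):
    counts[sample_action(q_values, temperature=1.0, rng=rng)] += 1

print("action counts at T=1.0:", counts)
print("policy at T=0.01:", [round(p, 3) for p in softmax_policy(q_values, 0.01)])
```

A high temperature spreads choices across all actions (exploration), while a low temperature makes the agent pick the best-known action almost every time (exploitation).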

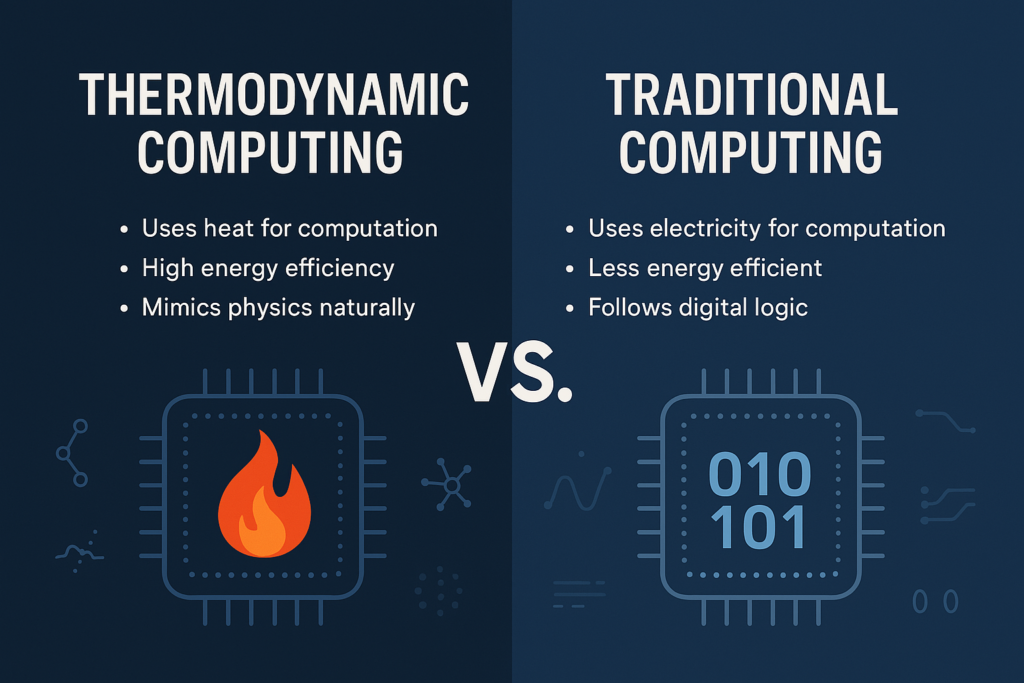

Thermodynamic Computing vs. Traditional Computing

| Feature | Traditional Computing | Thermodynamic Computing |
|---|---|---|
| Logic | Binary, deterministic | Probabilistic, analog |
| Energy efficiency | Moderate to low | High |
| Optimization strategy | Algorithmic | Energy minimization |
| Scalability for AI | Hardware-limited | Thermodynamically scalable |
| Suitability for complex AI | Increasingly strained | Naturally adaptive |

Challenges and Limitations

Despite its promise, thermodynamic computing is not without hurdles:

- Hardware development: Specialized chips are still in early stages.

- Noise and unpredictability: Probabilistic systems are harder to control.

- Lack of standardization: No agreed-upon frameworks or protocols yet.

However, these are typical growing pains of any revolutionary tech—similar challenges once plagued quantum computing and neuromorphic chips.

Future Outlook

With growing interest from tech giants and academic institutions, thermodynamic computing may be integrated into AI accelerators and hybrid chips within the next 5-10 years. Google, MIT, and DARPA are already exploring related technologies.

Expect future developments such as:

- Commercial chipsets for energy-efficient AI

- AI training platforms using entropy-based learning

- Software frameworks for thermodynamic algorithms

Final Thoughts

Thermodynamic computing represents an exciting new direction for AI development. By learning from the fundamental physics of our universe, researchers are crafting machines that may one day outpace current AI hardware in both performance and sustainability.

If you’re interested in the future of artificial intelligence, keeping an eye on thermodynamic computing isn’t just smart—it’s essential.
